Understanding how API rate limiting works is essential for maintaining the stability and security of modern microservices architectures in 2026. Without robust traffic throttling, a single runaway script or a malicious DDoS attack can quickly exhaust your server resources, leading to cascading system failures. By implementing intelligent rate limiting algorithms at the API gateway level, engineering teams can enforce fair usage policies, protect backend databases, and provide a consistent developer experience for legitimate users while automatically blocking abusive traffic.

In the interconnected digital landscape of 2026, Application Programming Interfaces (APIs) are the primary targets for automation—both good and bad. While legitimate developers use APIs to build innovative applications, malicious actors use them for scraping data, brute-forcing credentials, or overwhelming infrastructure.

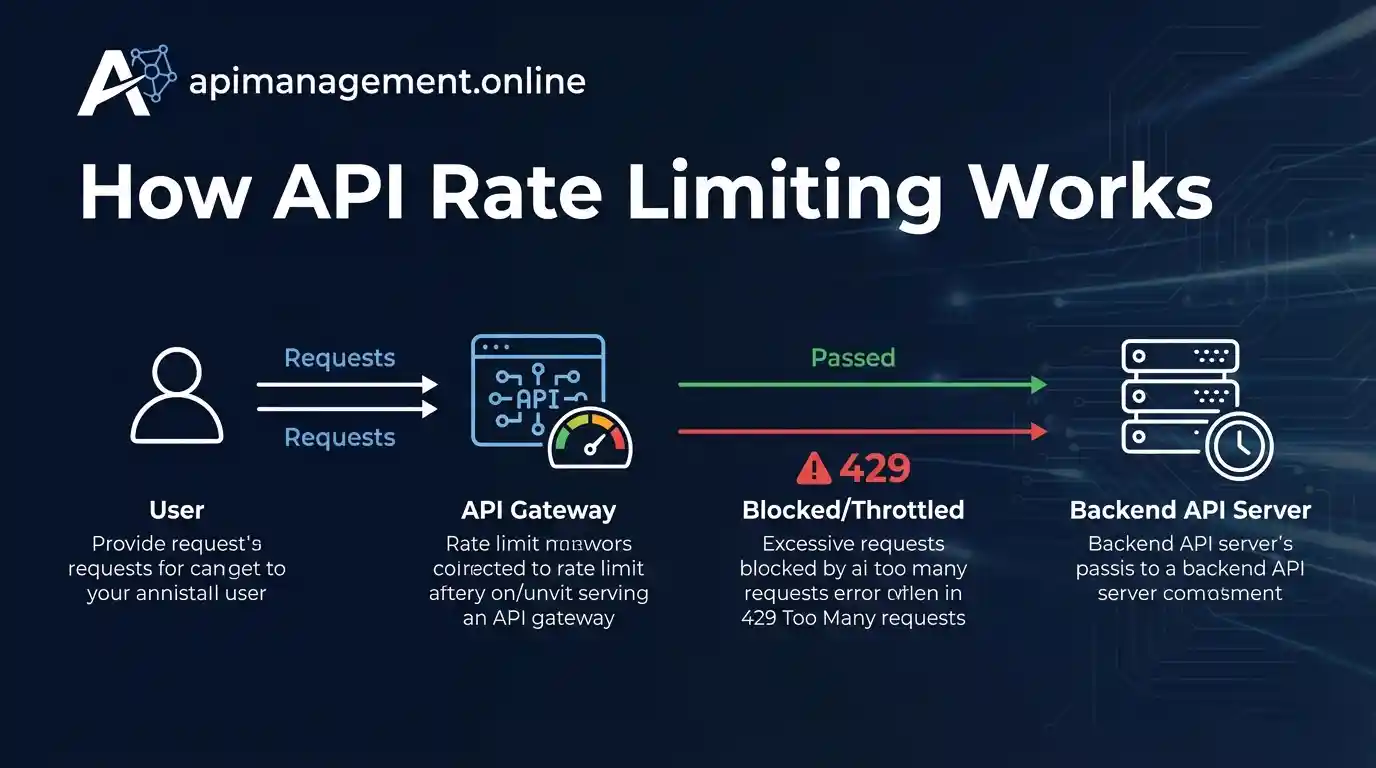

API rate limiting acts as the “traffic cop” of your digital ecosystem. It is a technical strategy that restricts the number of requests a client (identified by IP address, API key, or user ID) can make within a specific timeframe. When a client exceeds their allotted quota, the system returns an HTTP 429 Too Many Requests status code, preserving your system’s integrity.

The Fundamental Mechanics of Throttling

To implement rate limiting effectively, an API gateway or management platform must track three core variables for every incoming request:

- The Identifier: Who is making the request? This is typically tracked via source IP address, a unique API key, or a JWT user claim.

- The Threshold: How many requests are allowed? For example, “100 requests per minute.”

- The Window: Over what period is the limit measured? This could be per second, per minute, or even per day.

Layered Throttling Strategies

In 2026, mature organizations do not rely on a single global limit. Instead, they use a layered approach:

- Edge-Level Limiting: Enforced at the CDN (like Cloudflare) to block obvious volumetric floods before they reach your origin servers.

- Gateway-Level Limiting: Enforced by tools like Kong or Tyk to apply business logic, such as tiered billing (e.g., Free users get 10 req/sec while Pro users get 100 req/sec).

- Resource-Based Limiting: Specific limits for expensive endpoints, such as a PDF generator or a complex AI inference call, to prevent database exhaustion.

Core Rate Limiting Algorithms in 2026

Choosing the right algorithm is critical. Each has technical trade-offs regarding memory consumption, accuracy, and how they handle “bursty” traffic.

1. Token Bucket

Tokens are added to a virtual “bucket” at a steady rate. Each request consumes one token. If the bucket is empty, the request is rejected. Why it’s popular: It allows for occasional bursts of traffic as long as the bucket has accumulated tokens, making it ideal for standard web applications.

2. Leaky Bucket

Requests enter a bucket with a hole in the bottom. Traffic “leaks” out to the backend at a constant, fixed rate. If the bucket overflows, new requests are discarded. Why it’s popular: It smooths out bursty traffic into a perfectly steady stream, protecting fragile legacy backend systems.

3. Fixed Window vs. Sliding Window

Fixed Window Counter is the simplest to code: it resets every 60 seconds. However, it is vulnerable to a “burst” at the edge of the window (e.g., 100 requests at 11:59:59 and another 100 at 12:00:01).

Sliding Window Log/Counter algorithms solve this by looking at the last 60 seconds dynamically, providing a much fairer and more accurate enforcement of the limit, though they require more memory to track individual request timestamps.

Security Warning: The Cost of Improper Throttling

Failure to implement rate limiting on sensitive endpoints like /login or /password-reset is a top vulnerability in the OWASP API Security Top 10. Attackers use automated tools for credential stuffing; without rate limiting, they can attempt millions of logins per hour without consequence.

Advanced Patterns: Complexity-Based Throttling

In 2026, “one request equals one point” is becoming obsolete. Modern API management platforms use complexity-based throttling. For example, a simple GET request for a user profile might cost 1 point, but a massive POST request that triggers a machine learning model might cost 50 points.

This ensures that users are limited based on the actual computational load they place on your infrastructure, rather than just the raw number of network calls they make. This is particularly crucial for companies offering Generative AI APIs, where inference costs can vary wildly based on the length of the prompt.

Our Commitment to Transparent Reviews

At API Management Online, we believe architectural decisions should be driven by technical facts, not marketing hype. To maintain your trust, we operate with complete transparency:

- No Software Sales: We are a technical media and education platform. We do not sell API gateways, software licenses, or consulting services. We will never ask for your credit card or PayPal information.

- Analytics Usage: We utilize Google Analytics to monitor aggregated, anonymized user traffic. This helps us understand which technical topics (like rate limiting algorithms or Kubernetes security) are most valuable to our community.

- Display Advertising: To keep our high-quality guides free and cover our operational costs, we display programmatic ads using Google Ads. Third-party vendors use cookies to serve ads based on your digital footprint. You can opt out via your Google settings at any time.

If you have questions about which rate limiting strategy fits your specific stack, reach out via our secure Contact Page.

Frequently Asked Questions (FAQ)

What is the difference between Rate Limiting and Throttling?

While often used interchangeably, rate limiting refers to the hard cap on requests (returning an error), while throttling can also mean slowing down the response time to discourage the client from making more requests without failing them entirely.

How should clients handle a 429 Too Many Requests error?

Best practice dictates that the API should return a Retry-After header. Clients should implement Exponential Backoff with Jitter—waiting an increasing amount of time before retrying to prevent “thundering herd” scenarios that could further destabilize the server.

Can I implement rate limiting without an API Gateway?

Yes, you can implement it directly in your application code (e.g., using Redis counters). However, this consumes backend CPU resources to process invalid requests. An API gateway is more efficient because it drops the traffic at the edge before it ever reaches your application.

Does rate limiting protect against SQL Injection?

No. Rate limiting only controls the volume of traffic. To protect against malicious payload contents like SQL Injection or XSS, you must implement a Web Application Firewall (WAF) or payload validation policies alongside your rate limits.